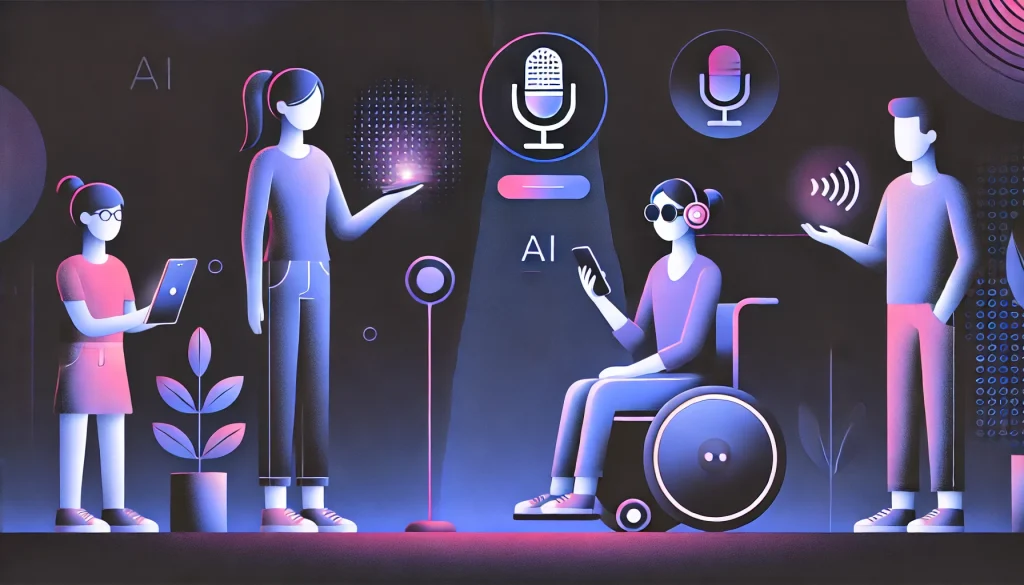

Artificial Intelligence (AI) is being hailed as a powerful ally for people with disabilities. With the ability to transcribe, describe, translate, and personalize, AI tools are opening doors that were previously closed. From automatic captions to voice-enabled interfaces, the promise of a more accessible digital world feels closer than ever. But alongside the potential comes a growing concern: is AI solving the problem, or just changing its shape?

How AI Is Making Things Easier

For many people with disabilities, AI is already improving daily life. Voice assistants like Siri, Alexa, and Google Assistant let users send texts, set alarms, and perform other tasks using only their voice. Real-time captioning, available on platforms like Google Meet and YouTube, helps people who are deaf or hard of hearing follow conversations and events without always needing a human captioner.

Google’s Project Euphonia goes a step further by training speech recognition to understand people with non-standard speech, such as speech affected by ALS or other neurological conditions. The company has recently expanded the project to support multiple African languages, improving access for communities that have long been overlooked in tech development. Chrome has also added AI-powered OCR (optical character recognition) that extracts the text from scanned PDFs so that screen readers can read it aloud. For blind and low-vision users who rely on screen readers, this opens up scanned documents that were previously out of reach.

Another major development is AI-generated image descriptions. Tools like Microsoft’s Seeing AI or Facebook’s automatic alt text feature can describe what’s in a photo to people who are blind or have low vision. The benefits can reach beyond that group: one user with aphantasia, a condition in which people can’t form mental images, shared how AI descriptions helped them understand visual scenes for the first time. In this way, AI is helping people not just navigate digital spaces, but better experience and interpret the world around them.

The Challenges Beneath the Surface

Yet for every success story, there are also cautionary tales. A 2024 Financial Times article described a blind user whose AI tool identified the bullet points on a website as “a toilet”, a bizarre error caused by the tool misreading an image. Errors like this are not rare, and when users depend on AI tools to interpret online content, each mistake quickly erodes trust.

Another concern is the growing reliance on automated accessibility overlays: software that promises to fix a website’s accessibility issues instantly. Companies like accessiBe have become popular by claiming to make websites compliant with laws like the Americans with Disabilities Act (ADA) in just minutes. But disability advocates argue that these overlays often don’t work properly and can actually make things worse; some even interfere with screen readers or with how users navigate a page. Overlays have not stemmed the wave of lawsuits in the United States, where over 4,500 digital accessibility cases were filed in 2023 alone. Many of them targeted websites whose AI overlays failed to provide a truly usable experience.

Bias in the System

A bigger, structural issue lies in the way AI is built. Most AI systems learn by analyzing large data sets. If those data sets don’t include examples of people with disabilities, or if they overrepresent some groups and underrepresent others, the resulting system will tend to perform worst for the people it saw least. Facial analysis offers a well-documented example: the 2018 Gender Shades study found that commercial gender-classification tools had error rates of up to 35% for darker-skinned women, compared with under 1% for lighter-skinned men. That study measured skin tone and gender rather than disability, but it shows how uneven AI performance can be when training data lacks diversity.

This concern also applies to assistive technologies. In a 2024 survey by the accessibility platform Fable, 91% of assistive tech users said they were paying close attention to developments in AI. But only 7% felt that AI systems were being designed with input from people like them. That’s a huge gap between interest and inclusion. Many fear that their needs are being sidelined in the rush to build and deploy new tools.

Overpromising and Underdelivering

It’s easy to get excited about AI because of its speed and scale. Automated tools can process thousands of pages, identify missing alt text, or flag accessibility issues in a fraction of the time it would take a human. But this speed can also lead to shortcuts. When companies rely solely on automation to fix accessibility problems, they often miss the context and nuance that only humans can catch.
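
To make that trade-off concrete, here is a minimal sketch of the kind of automated check described above, written in Python using only the standard library's `html.parser`. It flags `<img>` elements that have no `alt` attribute at all. The class `MissingAltChecker`, the helper `find_missing_alt`, and the sample markup are hypothetical, chosen for illustration; real accessibility scanners check far more than this.

```python
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Hypothetical checker: collects the src of every <img> with no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names before calling this.
        if tag != "img":
            return
        attr_map = dict(attrs)
        # alt="" is valid for purely decorative images, so only a truly
        # absent alt attribute is flagged.
        if "alt" not in attr_map:
            self.missing.append(attr_map.get("src", "<no src>"))

    # Self-closing <img/> tags also reach handle_starttag, because
    # HTMLParser's default handle_startendtag calls it.


def find_missing_alt(html: str) -> list[str]:
    """Return the src of each image that lacks an alt attribute."""
    checker = MissingAltChecker()
    checker.feed(html)
    checker.close()
    return checker.missing


if __name__ == "__main__":
    page = """
    <img src="chart.png">
    <img src="logo.png" alt="image">
    <img src="divider.png" alt="">
    <img src="team.jpg" alt="Five people standing in front of an office building">
    """
    print(find_missing_alt(page))  # → ['chart.png']
```

The check catches `chart.png` instantly, but it passes `logo.png`, whose alt text ("image") tells a screen reader user nothing. Judging whether a description is actually meaningful still requires a human.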

A growing number of experts argue that AI should not be seen as a one-click fix. The European Accessibility Act, whose requirements began applying in June 2025, reflects the same thinking: it requires covered digital products and services to meet accessibility standards, which rewards building accessibility in from the start rather than patching it later with overlays. The law covers banking services, e-commerce platforms, and other consumer-facing services, and organizations that fall short face penalties set by each EU member state. It’s part of a larger global push to ensure that technology works for everyone, not just the majority.

What the Future Looks Like

Despite the risks, companies are starting to take accessibility more seriously. Apple recently introduced new iOS features including better braille support, customizable voice models, and live captions for FaceTime. Google is working on inclusive language models for Android that better understand diverse speech patterns. Microsoft, meanwhile, is promoting responsible AI development by encouraging transparency in how data is collected and used.

Researchers are also exploring ways to blend AI tools with human insight. A tool called CodeA11y, for example, helps developers write accessibility-friendly code using AI suggestions—but it also explains why a particular change is needed, so developers learn along the way. Similarly, companies like Perfecto are integrating AI-based accessibility testing with manual audits to ensure better coverage and fewer errors.

Conclusion

AI has enormous potential to improve digital accessibility. It can speed up fixes, power new features, and make online content more inclusive for millions of people. But it can also introduce new problems—especially when tools are designed without input from the people they’re meant to serve.

The key is to combine the power of AI with the judgment of humans. Designers, developers, and product teams must include people with disabilities at every stage of the process—from research and testing to deployment and feedback. Governments must create strong guidelines to ensure that AI tools are safe, effective, and fair. And companies must resist the temptation of quick fixes, instead investing in long-term solutions that prioritize inclusion.

In short, AI can absolutely help solve accessibility challenges—but only if we build it right.